Past performance is not a library of brags. It is a compliance filter with relevance rules. The wrong three contracts prove you were busy. The right three prove you meet what this solicitation defines as relevant. Those are different lists, and the gap burns small teams more than anything in Section C.

If you mapped Section M, you already know how references score. This article is about selecting references that survive the rules, not picking the programs you like best.

TL;DR

Extract quantitative and scope rules first (count, recency, dollars, similarity, entity). Build a rules matrix, then shortlist contracts. AI helps stress-test fit against language; humans verify dates, dollars, and eligibility.

The flagship trap: what would you do?

You have five strong programs. The solicitation asks for three contracts within five years above a dollar threshold in a defined scope family. Capture wants to lead with your flagship. That flagship misses one stated constraint.

How do you proceed?

Option A: Lead with the flagship

Tell the story first. You will argue relevance in narrative later.

Option B: Rules matrix first

Extract every stated constraint from the solicitation and amendments. Tag candidate contracts against each rule before writers lock.

Option C: Fastest paperwork

Pick the three contracts you can document fastest. Speed over fit.

The correction: Option B wins. Option A courts a scoring miss or rejection when evaluators apply the solicitation’s relevance test literally. Option C optimizes for paperwork speed, not score.

The industry-standard past performance review workflow

Capture leads who win here behave like auditors before they behave like storytellers. They translate vague language (“similar scope,” “relevant experience”) into checkable claims tied to contract attributes your records can prove.

Pain this avoids: relevance mismatch, recency failures, dollar threshold misses, wrong entity (branch vs parent), JV or mentor-protégé attribution errors, and late discovery that CPARS access or customer permission blocks a chosen reference.

Workflow at a glance

- Extract rules: count, period, dollars, scope similarity, contract type limits, and who may claim performance.

- Build a candidate list from your contract ledger (no storytelling yet).

- Tag each candidate pass/fail per rule; note “unknown” when data is missing.

- Stress-test “similar work” language against each survivor with skeptical prompts (below).

- Close gaps before writers draft (permissions, JV letters, missing mods).

- Align narrative to Section M subfactors that mention past performance.
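The tagging steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the rule values, the `Contract` fields, and the choice to measure recency from the contract end date are all assumptions, and a real solicitation may define each one differently.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical rule values; real ones come from the solicitation and amendments.
PROPOSAL_DUE = date(2025, 6, 30)
RECENCY_YEARS = 5
MIN_DOLLARS = 2_000_000
SCOPE_FAMILY = "it-modernization"


@dataclass
class Contract:
    name: str
    end_date: Optional[date]              # None means the ledger lacks the value
    dollars: Optional[int]
    scope_family: Optional[str]
    performed_by_offeror: Optional[bool]  # entity rule: may this offeror claim it?


def tag(value, check) -> str:
    """Return 'unknown' when data is missing, otherwise 'pass' or 'fail'."""
    if value is None:
        return "unknown"
    return "pass" if check(value) else "fail"


def recency_cutoff(due: date, years: int) -> date:
    """Earliest acceptable end date (assumes recency is measured from end date)."""
    try:
        return due.replace(year=due.year - years)
    except ValueError:  # Feb 29 due date, non-leap target year
        return due.replace(year=due.year - years, day=28)


def matrix_row(c: Contract) -> dict:
    cutoff = recency_cutoff(PROPOSAL_DUE, RECENCY_YEARS)
    return {
        "recency": tag(c.end_date, lambda d: d >= cutoff),
        "dollars": tag(c.dollars, lambda v: v >= MIN_DOLLARS),
        "scope": tag(c.scope_family, lambda s: s == SCOPE_FAMILY),
        "entity": tag(c.performed_by_offeror, lambda b: b),
    }


def survivors(contracts) -> list:
    """Names of contracts where every rule is tagged 'pass'."""
    return [c.name for c in contracts
            if all(v == "pass" for v in matrix_row(c).values())]


# Invented ledger entries for illustration only.
ledger = [
    Contract("Flagship", date(2019, 3, 31), 9_000_000, "it-modernization", True),
    Contract("Alpha", date(2023, 9, 30), 3_500_000, "it-modernization", True),
    Contract("Bravo", date(2022, 1, 15), None, "it-modernization", True),
]

for c in ledger:
    print(c.name, matrix_row(c))
print("Survivors:", survivors(ledger))
```

In this invented ledger the flagship fails recency and Bravo stays "unknown" on dollars until someone pulls the contract file, so only Alpha survives. An "unknown" is a task for a human, not a pass.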

AI to the rescue: techniques and prompt starters

Use AI to compare solicitation language to candidate summaries, not to invent facts. Never let the model choose your references without human verification of dates and dollars.

Techniques:

- Side-by-side tables: rule text vs candidate attribute columns.

- Gap typing: ask for reasons a reference could be disallowed, not reasons it wins.

- Red-team: “What missing evidence would make this reference fail?”
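One way to prepare the side-by-side table before it goes into a prompt is sketched below. The rule keys, the rule text, and the candidate attributes are hypothetical; the point is only that every rule gets a row, and a missing attribute shows up as `MISSING` instead of silently disappearing.

```python
def side_by_side(rules: dict, candidate: dict) -> str:
    """Render solicitation rule text next to the candidate attribute it is checked against."""
    rows = [("Rule", "Solicitation text", "Candidate attribute")]
    for key, text in rules.items():
        rows.append((key, text, str(candidate.get(key, "MISSING"))))
    widths = [max(len(r[i]) for r in rows) for i in range(3)]
    lines = [" | ".join(cell.ljust(w) for cell, w in zip(r, widths)).rstrip()
             for r in rows]
    lines.insert(1, "-+-".join("-" * w for w in widths))
    return "\n".join(lines)


# Invented rule text and candidate data for illustration only.
example_rules = {
    "recency": "Completed within five years of proposal due date",
    "dollars": "Total value above the stated threshold",
}
example_candidate = {"recency": "Ended 2023-09-30"}

table = side_by_side(example_rules, example_candidate)
print(table)
```

Quoting the rule text verbatim in the middle column keeps the model, and the reviewer, anchored to the solicitation's wording instead of a paraphrase.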

Prompt starter 1: extract PP rules

Prompt starter 2: candidate stress test

Prompt starter 3: relevance sentences (draft)

Prompt starter 4: portfolio gap scan

Common AI failure modes: invented dates, softened “must” language, and confident similarity claims without tie-back to RFP text. Fix with explicit “do not infer” instructions and human record checks.
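The "do not infer" fix can be baked into a reusable template so the guardrails travel with every request. The sketch below is hypothetical wording, not one of the prompt starters listed above; the guardrail sentences and the function name are assumptions chosen to mirror the three failure modes.

```python
# Hypothetical guardrails, one per failure mode named in the text.
GUARDRAILS = (
    "Do not infer or invent dates, dollar values, or scope.",
    "Preserve mandatory wording such as 'must' and 'shall' exactly as written.",
    "Quote the solicitation text you rely on for every similarity claim.",
    "Answer 'unknown' when the candidate summary lacks the evidence.",
)


def build_stress_test_prompt(rule_text: str, candidate_summary: str) -> str:
    """Assemble a skeptical-review prompt with the guardrails stated up front."""
    guardrails = "\n".join(f"- {g}" for g in GUARDRAILS)
    return (
        "You are reviewing a past performance reference as a skeptical evaluator.\n\n"
        f"Instructions:\n{guardrails}\n\n"
        f"Solicitation rules (verbatim):\n{rule_text}\n\n"
        f"Candidate summary:\n{candidate_summary}\n\n"
        "List every reason this reference could be disallowed, "
        "and what missing evidence would make it fail."
    )


prompt = build_stress_test_prompt(
    "Offerors must submit three contracts completed within five years.",
    "Alpha: help desk modernization, ended 2023-09-30.",
)
print(prompt)
```

Guardrails reduce these failures but do not remove them, which is why the record check that follows still applies.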

Verification (non-negotiable)

Humans verify contract numbers, periods of performance, dollars, and entity eligibility against your records and the solicitation. AI drafts arguments; records prove them.
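A small comparison script can point the human reviewer at the fields that need attention, though it does not replace opening the contract file. The sketch below assumes hypothetical field names and an invented record; it flags any field where the drafted claim disagrees with the ledger or where the ledger has no value at all.

```python
# Hypothetical field names; use whatever your contract ledger actually stores.
VERIFY_FIELDS = ("contract_number", "pop_start", "pop_end", "dollars", "entity")


def verify_claim(claim: dict, record: dict, fields=VERIFY_FIELDS) -> list:
    """Return (field, reason) pairs where the drafted claim is not backed by the record."""
    issues = []
    for f in fields:
        if f not in record:
            issues.append((f, "no record value"))
        elif claim.get(f) != record[f]:
            issues.append((f, f"claim={claim.get(f)!r} record={record[f]!r}"))
    return issues


# Invented values for illustration only.
record = {
    "contract_number": "EXAMPLE-0001",
    "pop_start": "2020-10-01",
    "pop_end": "2023-09-30",
    "dollars": 3_200_000,
    "entity": "Example Co. (parent)",
}
drafted_claim = dict(record, dollars=3_500_000)  # the draft rounded the value up

issues = verify_claim(drafted_claim, record)
print(issues)
```

An empty list means the draft matches the ledger, not that the ledger is right; the ledger itself still has to match the signed contract and its modifications.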

Why the matrix work repeats every Monday

Even a strong reference database still needs this RFP’s filter applied every time. The gap is repeatability: same discipline on pursuit seven as pursuit one, without re-deriving prompts from scratch in a new chat.

Unique mechanism

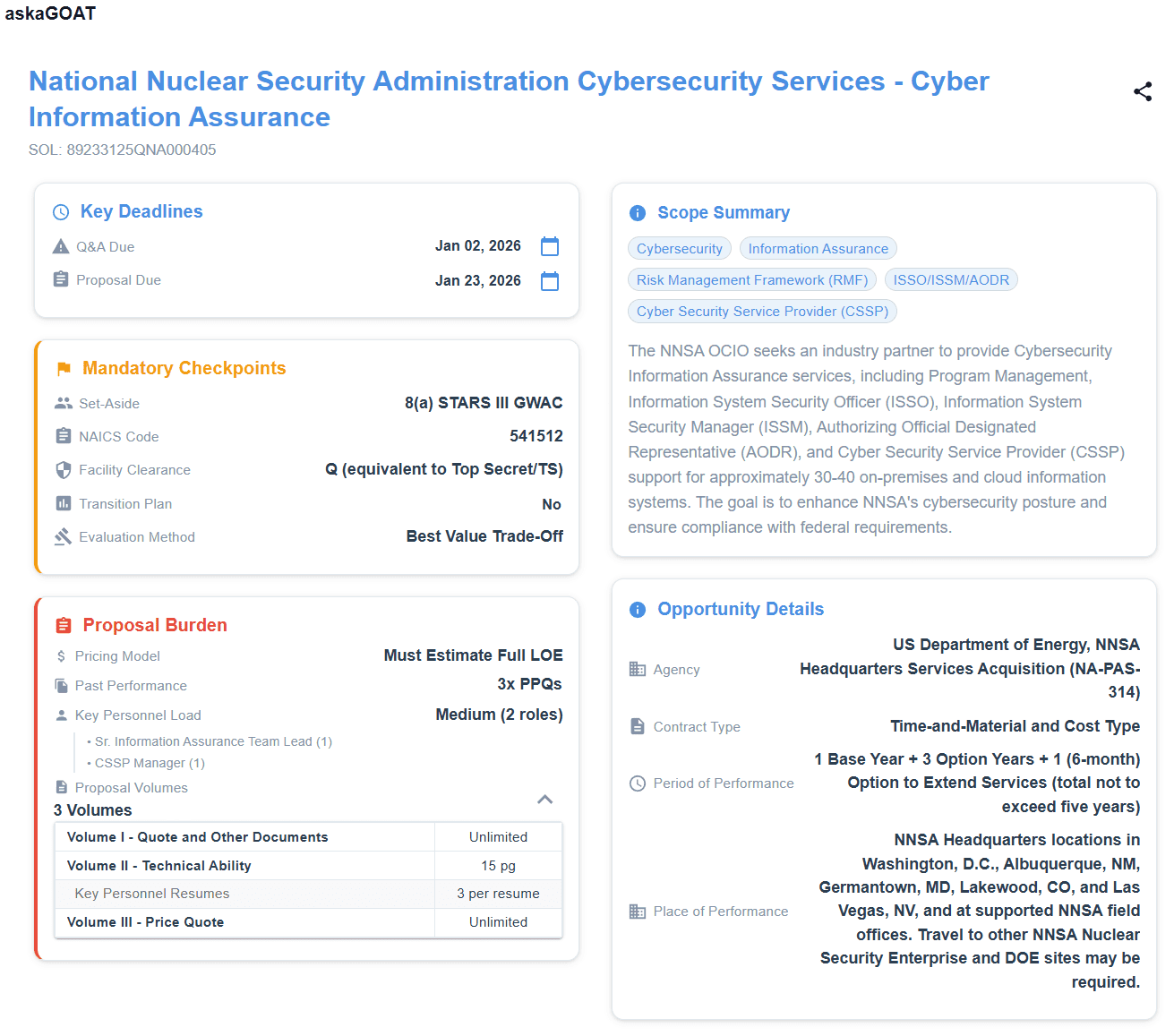

askaGOAT reapplies the same extraction across the package

Upload the RFP. Get structured triage that keeps past performance rules visible next to dates and workload signals, so reference strategy does not live in a disconnected spreadsheet.

No credit card necessary.

Next in this series

Close the loop on economics and risk: Section H and special contract requirements before pricing locks.

Frequently asked questions

What should a federal past performance review cover first?

Extract the solicitation’s past-performance or relevant-experience rules into a matrix: recency windows, number of references, similarity language, page limits, and any restrictions on contract types or teammates. Apply that filter before writers invest in narratives.

How should AI be used for past performance review?

Use AI to compare rule text to candidate summaries in side-by-side tables and to stress-test how a reference could fail. Never use it to invent dates, dollars, or scope. Require tie-back to RFP language for every similarity claim.

What must humans verify on past performance?

Humans verify contract numbers, periods of performance, dollars, and entity eligibility against your records and the solicitation. AI may draft argument angles; records and amendments prove them.

How does past performance connect to Section M?

Align reference strategy to Section M subfactors that mention past performance or relevant experience so evidence maps to how evaluators score, not only to what feels like your strongest story.

Make past performance triage repeatable

Try askaGOAT free and keep eligibility, scoring, and reference rules in one place for every pursuit.
